40 Terabytes Per Week With Linux-based Clusters at Dunnhumby

It seems reasonable to think that this company tested the open source clustering stuff, but I don’t know for certain. There are folks out there using open source cluster filesystems for “large I/O” processing, as is apparent in this recent OCFS2 bug report (emphasis added by me):

During maintenance window, decided to use the OCFS2 filesystem to store a large backup file (about 5-10 gig file). SCP’ed the file from an outside server to node1 of the cluster […]

A little third-party perspective is necessary. Not even back in 1990, with Fujitsu Swallow IV drives, was 10GB considered “large.” The OCFS2 user that filed the bug continued:

After a few minutes, node1 crashed.

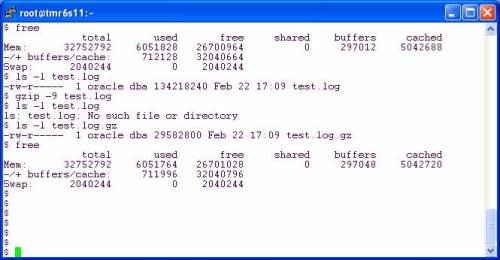

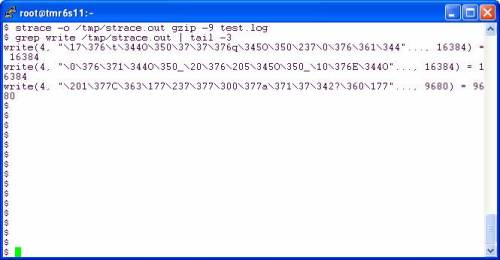

Let’s think about that for a moment. The user is bringing unstructured data into the OCFS2 cluster filesystem using scp(1). Just for the heck of it, let’s take the user at his word and do the math. He said, “After a few minutes.” Let’s say a few minutes is 3, or 180 seconds. That means the scp(1) was likely not running over Gigabit Ethernet, because 180 seconds is enough time to move about 20GB at full bandwidth over a single wire, which is more than the entire 5-10GB file. That pretty much leaves 100BaseT, which moves only about 2GB in that time. So, somewhere around 2GB or so, OCFS2 crumbled. Hmmm, lowered expectations. And the fun continued:

Node1 restarted, but crashed again attempting to reenter the cluster.

Leaving Node1 down, attempted reboot of Node2 and Node3.

Both panic crashed during restart attempting to start OCFS2 and join the cluster.

Eventually, found that we had to start Node1 first, then restart the other two nodes.

Good grief, I’m not even going to comment on that bit, but I will point out that the suggested workaround, using the O_DIRECT-enabled coreutils, seems off the mark. The user is trying to scp(1), not cp(1) or mv(1).
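For anyone who wants to check the back-of-the-envelope math on that scp(1) transfer, here is a minimal sketch. It assumes raw line rate with no protocol or encryption overhead, and the 3-minute figure is my guess at “a few minutes,” not something the bug report states. The function name `gb_moved` is just for illustration.

```python
def gb_moved(link_megabits_per_s, seconds, efficiency=1.0):
    """Decimal gigabytes moved over a link in the given time.

    efficiency is an assumed fraction of line rate actually achieved
    (1.0 means the theoretical maximum, which scp never quite reaches).
    """
    bytes_per_second = link_megabits_per_s * 1_000_000 / 8
    return bytes_per_second * seconds * efficiency / 1e9


FEW_MINUTES = 180  # assumption: "a few minutes" taken as 3 minutes

gige_gb = gb_moved(1000, FEW_MINUTES)     # Gigabit Ethernet
fast_e_gb = gb_moved(100, FEW_MINUTES)    # 100BaseT

print(f"Gigabit Ethernet: {gige_gb:.2f} GB in {FEW_MINUTES} s")
print(f"100BaseT:         {fast_e_gb:.2f} GB in {FEW_MINUTES} s")
```

At raw line rate that works out to 22.5GB for Gigabit Ethernet and 2.25GB for 100BaseT. Knock some off for real-world overhead and you land at roughly the 20GB and 2GB figures used above.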

If It Isn’t Free, It’s Junk. Ad Revenue Funds Robust Software Development.

In spite of the fact that Ray Lane says traditional software products are soon to be replaced by cobbled-together bits and pieces of open source stuff, or what Wharton refers to as “ad supported software”, sometimes the good things in life are not free.

Huge Amounts of Unstructured Data

A recent article in Information Week’s Optimize Magazine covered one of PolyServe’s customers, Dunnhumby. These folks manipulate a lot of data using HP Blades as compute nodes accessing data over NFS in a PolyServe File Serving Utility scalable NAS solution. In their own words:

Each week, more than 40 terabytes of data is generated […]

“Hold it,” you say, “that’s a comparison of OCFS2 to PolyServe CFS via NFS. What does OCFS2 have to do with NFS?” That is a good question. OCFS2 is proclaimed to be a general purpose filesystem (emphasis added by me):

WHAT IS OCFS2?

OCFS2 is the next generation of the Oracle Cluster File System for Linux. It is an extent based, POSIX compliant file system. Unlike the previous release (OCFS), OCFS2 is a general-purpose file system

So why not export OCFS2 filesystems via NFS? That is the sort of thing you do with a general purpose filesystem after all. And, since OCFS2 is a cluster filesystem, there shouldn’t be any second thoughts about exporting the same filesystems from multiple nodes. That’s scalable file serving. In fact, that has been tried before, as documented in a bug report where a user was trying to implement scalable file serving using OCFS2. He reports:

I’m using OCSF2 for backups and to store files used by nfs clients. We have some errors during three file uploading from remote clients. In that case only one node can access those files but the other node receive from dlm a bad lockres error message […]

Right, OK. So what came next? Read on:

So I tried to stop ocfs2 and o2cb services on the second node but I can’t because heartbeat prevents any stop attempt. A stop attempt on the first node instead hungs and I have to reboot the first node because it is impossible to unmount ocfs2 filesystems (even if I use the lazy option).

I’m sure it couldn’t get any worse, right? He continued:

That is a serious problem because to recover the right functionality I had to reboot the first node (o2cb/ocfs2 services hang and after reboot ASM losts spfiles, so problem impacts even the databases running on cluster). There is any kind of action I can do to avoid that?

Surely he must be doing something really convoluted to hit problems so easily! He explains the scenario:

The scenario is:

node X exports filesystem to host Y

node W exports filesystem to host Z

from Y I create a file then I delete it then ls command on Z lists the file but I cannot open it. I receive I lot of messages like this:

Oct 20 08:53:34 proxb31 kernel: (15612,1):ocfs2_populate_inode:234 ERROR:

Invalid dinode: i_ino=9977187, i_blkno=9977187, signature = INODE01, flags = 0x0

Oct 20 08:53:34 proxb31 kernel: (15612,1):ocfs2_read_locked_inode:389 ERROR:

populate inode failed! i_blkno=9977187, i_ino=9977187

Good grief! Cache coherency problems? You mean like this warning about OCFS cache coherency:

Reasons for using odirect cp:

1. Buffered and direct ios are still racy in the kernel. As Oracle is doing directio, doing a normal cp exposes one to the chance of copying a stale page data.

2. Direct ios are less stressful on the page cache. As Oracle datafiles are invariably large, directio is more efficient in the long run.

3. In a clustered environment, the blocks on disk could be updated by any nodes in the cluster. Using odirect io ensures the latest version of the block is always read.

Oh boy. Anyway, back to the bug report. The bug report states that as of January 4, 2007, a patch for the NFS-exported OCFS2 problem is being tested at Oracle. However, the following comment was given to help set expectations:

One thing I’m concerned with is having two clients connect to seperate nodes. Since NFSD is not cluster aware, there may be some issues with unlinked inodes being in cache on one node and looked up on another. Is it possibleto confine your nfs exports to a single node for now, until we can get a better handle on that particular issue.

That seems like something that should have been spelled out in the Product Requirements Document, but I’m old-fashioned.
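To make the failure mode concrete, here is a toy model of the scenario from the bug report: two nodes export the same shared filesystem, one unlinks a file, and the other still lists it from a stale cache. This is strictly a sketch and not OCFS2 or NFSD code. The class names, the `coherent` flag, and the assumption that the second node had cached a directory listing before the unlink are all mine.

```python
class SharedDisk:
    """Stands in for the shared storage both nodes mount."""
    def __init__(self):
        self.files = {}


class Node:
    """A node that caches directory listings locally."""
    def __init__(self, disk, coherent):
        self.disk = disk
        self.coherent = coherent  # hypothetical: invalidate peer caches?
        self.peers = []
        self.dir_cache = None

    def _changed(self):
        self.dir_cache = None
        if self.coherent:
            for peer in self.peers:
                peer.dir_cache = None  # cluster-wide invalidation

    def create(self, name, data):
        self.disk.files[name] = data
        self._changed()

    def unlink(self, name):
        del self.disk.files[name]
        self._changed()

    def listdir(self):
        if self.dir_cache is None:
            self.dir_cache = sorted(self.disk.files)
        return list(self.dir_cache)

    def open(self, name):
        return self.disk.files[name]  # raises KeyError if it is gone


def run_scenario(coherent):
    disk = SharedDisk()
    x, w = Node(disk, coherent), Node(disk, coherent)
    x.peers, w.peers = [w], [x]
    x.create("upload.dat", b"payload")  # host Y writes through node X
    w.listdir()                         # host Z lists through node W
    x.unlink("upload.dat")              # host Y deletes through node X
    listed = w.listdir()                # host Z lists again
    try:
        w.open("upload.dat")
        opened = True
    except KeyError:
        opened = False
    return listed, opened


print("no coherency:  ", run_scenario(coherent=False))
print("with coherency:", run_scenario(coherent=True))
```

Without invalidation, node W still lists the file yet cannot open it, which is the symptom host Z saw. With invalidation, the listing comes back empty as it should.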

Scalable File Serving with Linux. Who Needs a Cluster-Aware NFSD?

The NAS heads in a PolyServe File Serving Utility configuration (e.g., HP EFS Clustered Gateway) run the enterprise distributions: RHEL4 and SuSE SLES9. So while folks in Ray Lane’s and Wharton’s open source dream world might think that NFSD cannot function in a cluster with data consistency, PolyServe, with that dying traditional software model, seems to have pulled it off. Do you think Dunnhumby pushes 40TB of data per week through a PolyServe File Serving Utility cluster without NFSD scalability or, more importantly, cache coherency? Not a chance.
