I assert that Oracle over NFS is not going away anytime soon—it’s only going to get better. In fact, there are futures that make it even more attractive from a performance and availability standpoint, but even today’s technology is sufficient for Oracle over NFS. Having said that, there is no shortage of misunderstanding about the model. The lack of understanding ranges from clear ignorance about the performance characteristics to simple misunderstanding about how Oracle interacts with the protocol.

Perhaps ignorance is not always the case when folks miss the mark about the performance characteristics. Indeed, when someone tells me the performance is horrible with Oracle over NFS—and the say they actually measured the performance—I can’t call them a bold-faced liar. I’m sure nay-sayers in the poor-performance crowd saw what they saw, but they likely had a botched test. I too have seen the results of a lot of botched or ill-constructed tests, but I can’t dismiss an entire storage and connectivity model based on such results. I’ll discuss possible botched tests in a later post. First, I’d like to clear up the common misunderstanding about NFS and Oracle from a protocol perspective.

The 800lb Gorilla

No secrets here; Network Appliance is the stereotypical 800lb gorilla in the NFS space. So why not get some clarity on the protocol from Network Appliance’s Dave Hitz? In this blog entry about iSCSI and NAS, Dave says:

The two big differences between NAS and Fibre Channel SAN are the wires and the protocols. In terms of wires, NAS runs on Ethernet, and FC-SAN runs on Fibre Channel.

Good so far—in part. Yes, most people feed their Oracle database servers with little orange glass, expensive Host Bus Adaptors and expensive switches. That’s the FCP way. How did we get here? Well, FCP hit 1Gb long before Ethernet and honestly, the NFS overhead most people mistakenly fear in today’s technology was truly a problem in the 2000-2004 time frame. That was then, this is now.

As for NAS, Dave stopped short by suggesting NAS (e.g., NFS, iSCSI) runs over Ethernet. There is also IP over Infiniband. I don’t believe NetApp plays Infiniband so that is likely the reason for the omission.

Dave continues:

The protocols are also different. NAS communicates at the file level, with requests like create-file-MyHomework.doc or read-file-Budget.xls. FC-SAN communicates at the block level, with requests over the wire like read-block-thirty-four or write-block-five-thousand-and-two.

What? NAS is either NFS or iSCSI—honestly. However, only NFS operates with requests like “read-file-Budget.xls”. But that is not the full story and herein comes the confusion when the topic of Oracle over NFS comes up. Dave has inadvertently contributed to the misunderstanding. Yes, an NFS client may indeed cause NFS to return an entire Excel spreadsheet, but that is certainly not how accesses to Oracle database files are conducted. I’ll state it simply, and concisely:

Oracle over NFS is a file positioning and read/write workload.

Oracle over NFS is not traditional “file serving.” Oracle on an NFS client does not fetch entire files. That would simply not function. In fact, Oracle over NFS couldn’t possibly have less in common with traditional “file serving.” It’s all about Direct I/O.

Direct I/O with NFS

Oracle running on an NFS client does not double buffer by using both an SGA and the NFS client page cache. All platforms (that matter) support Direct I/O for files in NFS mounts. To that end, the cache model is SGA->Storage Cache and nothing in between—and therefore none of the associated NFS client cache overhead. And as I’ve pointed out in many blog entries before, I only call something “Direct I/O” if it is real Direct I/O. That is, Direct I/O and concurrent I/O (no write ordering locks).

I/O Libraries

Oracle uses the same I/O libraries (in Oracle9i/Oracle10g) to access files in NFS mounts as it does for:

- raw partitions

- local file systems

- block cluster file systems (e.g. GFS, PSFS, GPFS, OCFS2)

- ASM over NFS

- ASM on Raw Partitions

Oops, I almost forgot, there is also Oracle Disk Manager. So let me restate. When Oracle is not linked with an Oracle Disk Manager library or ASMLib, the same I/O calls are used for all of the storage options in the list I just provided.

So what’s the point? Well, the point I’m making is that Oracle behaves the same on NFS as it does on all the other storage options. Oracle simply positions within the files and reads or writes what’s there. No magic. But how does it perform?

The Performance is Glacial

There is a recent thread on comp.databases.oracle.server about 10g RAC that wound up twisting through other topics including Oracle over NFS. When discussing the performance of Oracle over NFS, one participant in the thread stated his view bluntly:

And the performance will be glacial: I’ve done it.

Glacial? That is:

gla·cial

adj.

1.

a. Of, relating to, or derived from a glacier.

b. Suggesting the extreme slowness of a glacier: Work proceeded at a glacial pace.

Let me see if I can redefine glacial using modern tested results with real computers, real software, and real storage. This is just a snippet, but it should put the term glacial in a proper light.

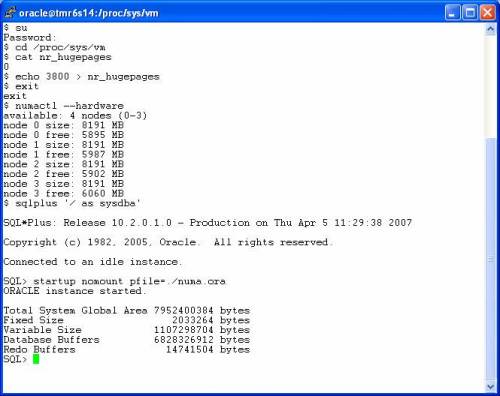

In the following screen shot, I list a simple script that contains commands to capture the cumulative physical I/O the instance has done since boot time followed with a simple PL/SQL block that performs full light-weight scans against a table followed by another peek at the cumulative physical I/O. For this test I was not able to come up with a huge amount of storage so I created and loaded a table with order entry history records—about 25GB worth of data. So that the test runs for a reasonable amount of time I scan the table 4 times using the simple PL/SQL block.

NOTE: You may have to right click-> view the image

The following screen shot shows that Oracle scanned 101GB in 466 seconds—223 MB/s scanning throughput. I forgot to mention, this is a DL585 with only 2 paths to storage. Before some slight reconfiguration I had to do I had 3 paths to storage where I was seeing 329MB/s—or about 97% linear scalability when considering the maximum payload on GbE is on the order of 114MB/s for this sort of workload.

NFS Overhead? Cheating is Naughty!

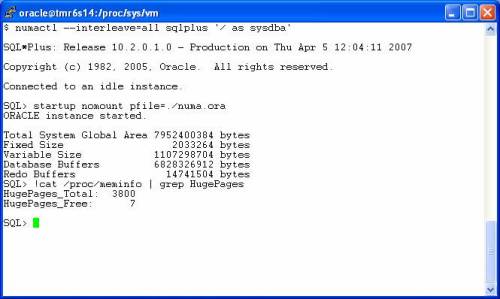

The following screen shot shows vmstat output taken during the full table scanning. It shows that the Kernel mode processor utilization when Oracle uses Direct I/O to scan NFS files falls consistently in range of 22%. That is not entirely NFS overhead by any means either.

Of course Oracle doesn’t know if its I/O is truly physical since there could be OS buffering. The screen shot also shows the memory usage on the server. There was 31 of 32GB free which means I wasn’t scanning a 25GB table that was cached in the OS page cache. This was real I/O going over a real wire.

For more information I recommend:

This paper about Scalable Fault Tolerant NAS and the NFS-related postings on my blog.

Recent Comments